HALCON Progress 25.11

HALCON Progress 25.11 is the latest release in MVTec's Progress edition. It brings a range of advanced features that further enhance the performance, flexibility and usability of HALCON for industrial and embedded vision applications.

Download MVTec HALCON 25.11 HERE

Find MVTec’s HALCON Guides and Tutorials HERE

Watch the HALCON 25.11 Webinar HERE

Read About the Major Features of HALCON Progress HERE

Introducing HALCON Progress 25.11 – New Capabilities for Smarter Vision Systems

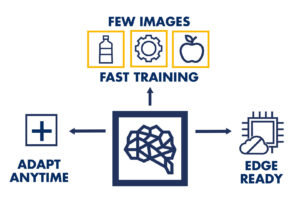

CONTINUAL LEARNING – CLASSIFICATION

HALCON 25.11 introduces a new technology that enables creating and updating classification models with minimal data per class and without full retraining. This allows adaptation to changing production conditions, including on edge devices with limited computing power.

Unlike conventional deep learning, this approach prevents catastrophic forgetting, where a model loses previously learned classes when it is trained on new ones, and keeps maintenance effort low. It builds on MVTec’s pretrained models, which are optimized for industrial scenarios, so applications can be updated quickly.

SCORE VISUALISATION FOR SHAPE MATCHING

Users gain clear visual feedback on shape matching by seeing how individual model parts contribute to the result, which makes model setup and optimisation far more intuitive, even for less-experienced users.

By configuring color-coded bins, users can immediately see which areas match well and which perform poorly, for example due to shadows or unwanted textures. This visual feedback makes it much easier to refine models, remove problematic parts, and optimize applications – a major usability advantage especially for non-expert users.
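As a rough illustration of the binning idea described above, the following Python sketch maps per-part match scores to color bins and lists the parts that fall into the weakest bin. It is not HALCON code: the bin edges, color names, part names and function names are all assumptions made for this example, and HALCON's own operators and parameters may differ.

```python
from bisect import bisect_right

# Hypothetical bin configuration (not HALCON parameters):
# scores below 0.5 are "red", 0.5 up to 0.8 are "yellow", 0.8 and above are "green".
BIN_EDGES = [0.5, 0.8]
BIN_COLORS = ["red", "yellow", "green"]


def color_for_score(score: float) -> str:
    """Return the color of the bin that a score in [0, 1] falls into."""
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"score must be in [0, 1], got {score}")
    # bisect_right counts how many edges are <= score, which is the bin index.
    return BIN_COLORS[bisect_right(BIN_EDGES, score)]


def weak_parts(part_scores: dict[str, float]) -> list[str]:
    """List the model parts whose score lands in the lowest bin."""
    return [
        name
        for name, score in part_scores.items()
        if color_for_score(score) == BIN_COLORS[0]
    ]


# Made-up scores for three hypothetical model parts.
example_scores = {"outer_contour": 0.93, "logo_edge": 0.61, "shadowed_corner": 0.22}
candidates_for_removal = weak_parts(example_scores)
```

In this made-up example, only `shadowed_corner` lands in the lowest bin, so it would be the first candidate to remove from the model or to inspect for shadows and unwanted texture.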

OPTIMISED DEEP OCR MODELS

The new release delivers Deep OCR models with up to roughly 50× faster inference on embedded devices. They are more resource-efficient while remaining highly accurate, which makes them well suited to inline ID reading, label verification, lot tracking and other high-volume tasks.

Thanks to their optimized architecture, they enable real-time OCR applications on low-power devices while maintaining high accuracy. This suits demanding inline applications such as serial number inspection, label verification, or lot tracking, in industries from logistics and packaging to pharmaceuticals, consumer goods, and medical technology.

MOBILENETV4 CLASSIFICATION MODELS

HALCON 25.11 adds support for the efficient MobileNetV4 deep learning architecture for classification and object detection—tailored for resource-constrained and edge systems.

Users benefit from fast inference times, lower system costs, and straightforward integration into existing HALCON projects. All models are pretrained by MVTec, ensuring strong performance for various downstream tasks such as quality inspection, product classification, presence detection, and surface defect analysis. Typical industries include automation, electronics, packaging, food, and medical technology.

VARIOUS CODE READING AND PRINT QUALITY INSPECTION IMPROVEMENTS

Enhanced QR code detection (especially on curved or deformed surfaces), improved bar code reading, and added compliance with the latest print-quality standards boost reliability across logistics, packaging, food and medical applications.

BUILT-IN SBOMs FOR EASIER COMPLIANCE

HALCON 25.11 now includes Software Bills of Materials (SBOMs) delivered as SPDX JSON files, making regulatory compliance, vulnerability management, licensing and lifecycle management significantly simpler. Because the SBOMs ship directly with HALCON, customers have less work to do.
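To show how an SPDX JSON file can feed a compliance workflow, here is a minimal Python sketch that lists each package with its version and concluded license. It assumes an SPDX 2.x JSON layout with a top-level `packages` array and the fields `name`, `versionInfo` and `licenseConcluded`. The sample document and its package names are invented for illustration and do not describe the actual contents or file names of the SBOMs shipped with HALCON.

```python
import json


def load_sbom(path: str) -> dict:
    """Read an SPDX JSON document from disk."""
    with open(path, encoding="utf-8") as handle:
        return json.load(handle)


def list_packages(sbom: dict) -> list[tuple[str, str, str]]:
    """Return sorted (name, version, license) rows for every package entry."""
    rows = []
    for package in sbom.get("packages", []):
        rows.append(
            (
                package.get("name", "UNKNOWN"),
                package.get("versionInfo", "UNKNOWN"),
                # SPDX uses NOASSERTION when no license conclusion is given.
                package.get("licenseConcluded", "NOASSERTION"),
            )
        )
    return sorted(rows)


# Invented sample document; real SBOM contents will differ.
SAMPLE_SBOM = json.loads("""
{
  "spdxVersion": "SPDX-2.3",
  "name": "example-sbom",
  "packages": [
    {"name": "zlib", "versionInfo": "1.3.1", "licenseConcluded": "Zlib"},
    {"name": "example-lib", "versionInfo": "2.0"}
  ]
}
""")

package_rows = list_packages(SAMPLE_SBOM)
```

For a real file, `list_packages(load_sbom(path))` would produce the same kind of table, which could then be compared against a license allow-list or a vulnerability database.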

LATEST PREVIEW VERSION OF HDevelopEVO 25.11

With HDevelopEVO 25.11, MVTec introduces the first preview of the HALCON Script Engine, the successor to the HDevEngine. It provides a runtime environment for executing HALCON Script files created in HDevelopEVO. Initially, the HALCON Script Engine can be integrated into applications via a C++ API; further interfaces such as .NET and Python are planned for future releases. This bridges the gap between prototyping in HDevelopEVO and production use in custom solutions.

How can the Latest Version of HALCON help you?

With HALCON 25.11, vision system integrators and OEMs gain more powerful tools for tackling complex inspection, automation and embedded use cases. The enhancements mean faster deployment, easier adaptability, better performance on edge devices and stronger support for regulatory and quality demands. This release will open new opportunities to optimise your vision systems and reduce development and maintenance effort.

Want to learn more?

Explore the full potential of HALCON Imaging Software. Download the brochure, start a trial version, or contact our Software Specialists at Multipix Imaging to discuss all your machine vision requirements.